All health platforms ship a RAG pipeline that answers medical questions. None of them know who is asking.

A system that cannot match the answer to the patient has not solved relevance. It has automated a liability.

At a Global AI Hackathon, HPPIE (Hyper-Personalized Patient Insights Engine) was built to test whether integrating persona modeling at the retrieval stage closes the clinical relevance gap. HPPIE placed 2nd of 300+.

What is standard RAG missing for patients?

Patients receive results without the system knowing their medical history. If two users submit the same query, it embeds to the same vector and returns the same documents. To the pipeline, they are the same patient.

This was described as "fragmented awareness," where a lack of persistent profiles capturing medications, allergies, or conditions across sessions creates friction. Each interaction starts cold. The patient filters the results themselves, using the clinical judgment they came to the platform to get help with.

What was the solution designed by HPPIE?

HPPIE is a three-stage Context Aware Clinical RAG pipeline built on FastAPI, Qdrant, and Ollama. Each stage targets a specific failure mode.

Figure: Context Aware Clinical RAG Architecture

Stage 1: Persona Modeling Layer

Before retrieval, HPPIE models a patient persona from structured clinical attributes: age, sex, active medications, diagnosed conditions, allergies, and health goals. This persona reshapes the query embedding before the query hits the vector store.

Most RAG systems treat personalization as post-retrieval reranking: retrieve top-k, then filter. The architecture rejected that because post-retrieval filtering from a 20-document pool will never surface a document ranked 47th in a generic query.
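The pool-size argument can be shown with a toy example. This is a minimal sketch, not HPPIE's code: it assumes a hypothetical corpus of 100 documents already ranked by a generic query, with the only persona-relevant document sitting at rank 47. The function names and the crude "persona-first" ordering are illustrative assumptions.

```python
from typing import List, Set


def rerank_after_top_k(ranked_ids: List[int], relevant: Set[int], k: int = 20) -> List[int]:
    """Post-retrieval personalization: cut to the top-k first, then filter by persona."""
    pool = ranked_ids[:k]
    return [doc_id for doc_id in pool if doc_id in relevant]


def retrieve_with_persona(ranked_ids: List[int], relevant: Set[int], k: int = 20) -> List[int]:
    """Persona-first retrieval, modeled crudely: persona relevance shapes the
    ranking before the top-k cut-off is applied."""
    # sorted() is stable, so the generic order is preserved within each group.
    persona_ranked = sorted(ranked_ids, key=lambda doc_id: doc_id not in relevant)
    return persona_ranked[:k]


# Hypothetical generic ranking: the document id equals its rank (1 is best).
generic_ranking = list(range(1, 101))
persona_relevant = {47}

post_filtered = rerank_after_top_k(generic_ranking, persona_relevant)
persona_first = retrieve_with_persona(generic_ranking, persona_relevant)

print(47 in post_filtered)   # the rank-47 document never entered the pool
print(47 in persona_first)   # it is reachable when the persona acts before the cut
```

No amount of filtering or reranking inside the 20-document pool can recover a document that was cut before the persona was consulted.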

HPPIE injects the persona into the query embedding, so the vector store receives a distinct query vector for each persona.
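The article does not specify how the injection is done. One possible approach, sketched here under stated assumptions, is to serialize the persona into text and prepend it to the query before embedding. The `Persona` class, `persona_query_text`, and `toy_embed` are hypothetical names, and `toy_embed` is a hashed bag-of-words stand-in for a real embedding model, used only so the example runs without dependencies.

```python
import hashlib
import math
from dataclasses import dataclass, field
from typing import List


@dataclass
class Persona:
    # Fields follow the attributes listed for the Persona Modeling Layer.
    age: int
    sex: str
    medications: List[str] = field(default_factory=list)
    conditions: List[str] = field(default_factory=list)
    allergies: List[str] = field(default_factory=list)
    goals: List[str] = field(default_factory=list)


def persona_query_text(persona: Persona, query: str) -> str:
    """Serialize non-empty persona attributes and prepend them to the query."""
    attributes = {
        "age": [str(persona.age)],
        "sex": [persona.sex],
        "medications": persona.medications,
        "conditions": persona.conditions,
        "allergies": persona.allergies,
        "goals": persona.goals,
    }
    context = "; ".join(
        f"{name}: {', '.join(values)}" for name, values in attributes.items() if values
    )
    return f"{context} | query: {query}"


def toy_embed(text: str, dim: int = 64) -> List[float]:
    """Stand-in for a real embedding model: hashed bag-of-words, L2-normalized."""
    vec = [0.0] * dim
    for token in text.lower().split():
        bucket = int(hashlib.sha256(token.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


# Illustrative personas; the sex values are assumptions, not from the article.
runner = Persona(age=35, sex="female", goals=["running"])
older = Persona(age=65, sex="male", conditions=["hypertension"])

runner_text = persona_query_text(runner, "chest pain")
older_text = persona_query_text(older, "chest pain")
runner_vec = toy_embed(runner_text)
older_vec = toy_embed(older_text)
```

The same two-word query now produces two different vectors, so the nearest-neighbor search itself starts from a different point for each patient.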

Stage 2: Hybrid Scoring Engine

Embedding similarity misses hard keyword dependencies in the medical domain. HPPIE combines three signals into one weighted score: embedding cosine similarity from Qdrant (weight 0.5), BM25 keyword matching weighted toward clinical terms (weight 0.3), and a behavioral relevance score from the persona model (weight 0.2).

Embeddings capture semantics. Keywords prevent clinical misses. Behavioral scoring is where the persona earns its value.
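A minimal sketch of the weighted combination, using the 0.5 / 0.3 / 0.2 weights stated above. Because BM25 scores are unbounded while cosine similarity is not, the sketch min-max normalizes each signal per candidate set before mixing; that normalization step, the function names, and the example scores are assumptions, not details from HPPIE.

```python
from typing import Dict, List, Tuple

# Weights as stated for the Hybrid Scoring Engine.
WEIGHTS = {"embedding": 0.5, "bm25": 0.3, "behavioral": 0.2}


def min_max(scores: Dict[str, float]) -> Dict[str, float]:
    """Rescale one signal to [0, 1] across the candidate documents."""
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        # All candidates tie on this signal, so it cannot discriminate.
        return {doc: 0.0 for doc in scores}
    return {doc: (value - lo) / (hi - lo) for doc, value in scores.items()}


def hybrid_rank(
    embedding: Dict[str, float],
    bm25: Dict[str, float],
    behavioral: Dict[str, float],
) -> List[Tuple[str, float]]:
    """Combine the three normalized signals and sort best-first."""
    e, b, p = min_max(embedding), min_max(bm25), min_max(behavioral)
    combined = {
        doc: WEIGHTS["embedding"] * e[doc]
        + WEIGHTS["bm25"] * b[doc]
        + WEIGHTS["behavioral"] * p[doc]
        for doc in embedding
    }
    return sorted(combined.items(), key=lambda item: item[1], reverse=True)


# Hypothetical scores for a hypertensive 65-year-old asking about chest pain.
ranking = hybrid_rank(
    embedding={"cardiac_risk": 0.80, "muscle_strain": 0.82, "gerd": 0.60},
    bm25={"cardiac_risk": 7.0, "muscle_strain": 3.0, "gerd": 1.0},
    behavioral={"cardiac_risk": 0.9, "muscle_strain": 0.1, "gerd": 0.3},
)
```

In this made-up example the musculoskeletal document wins narrowly on embedding similarity alone, but the keyword and behavioral signals move the cardiac document to the top.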

Stage 3: Local Inference via Ollama

Local inference was a compliance decision, not a performance one. Dockerized Ollama models run on-prem. The moment a persona-modified query containing a medication list leaves the local network, a HIPAA Business Associate Agreement (BAA) with the inference provider is required.

The tradeoff: a 7B model will not match GPT-4 on clinical summarization. This was accepted because sending enriched patient data to third-party APIs renders the system undeployable in regulated healthcare.
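A hedged sketch of what keeping inference local can look like in code. It targets Ollama's local HTTP endpoint (`/api/generate` on the default port 11434) and refuses to build a request for any host outside an allowlist. The allowlist, the function names, and the `mistral:7b` model tag are assumptions for illustration; the article does not say which 7B model or client code HPPIE used.

```python
import json
import urllib.request
from urllib.parse import urlparse

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
# Assumed allowlist; a Docker deployment might add its own service hostname.
LOCAL_HOSTS = {"localhost", "127.0.0.1", "::1"}


def build_generate_request(
    prompt: str, model: str = "mistral:7b", url: str = OLLAMA_URL
) -> urllib.request.Request:
    """Build a non-streaming generate request, rejecting non-local hosts."""
    host = urlparse(url).hostname
    if host not in LOCAL_HOSTS:
        raise ValueError(f"refusing to send patient context to non-local host: {host}")
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "mistral:7b") -> str:
    """Send the prompt to the local Ollama server and return the generated text."""
    with urllib.request.urlopen(build_generate_request(prompt, model)) as response:
        return json.loads(response.read())["response"]
```

Calling `generate(...)` requires a running Ollama server with the model pulled. The guard in `build_generate_request` is the point of the sketch: the persona-enriched prompt cannot be routed to a third-party API by a configuration mistake alone.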

What did the outcome reveal?

HPPIE pre-seeded Qdrant with curated medical data, then created summaries linked to specific personas. A 35-year-old runner querying "chest pain" received musculoskeletal content. A 65-year-old with hypertension received cardiac risk assessment.

The retrieval sets differed because the query itself was different, not because a filter was applied afterward. The entry placed 2nd of 300+, evaluated on AI innovation, technical architecture, clinical applicability, and production readiness.

Clinical relevance is an identity problem. The patient is a clinical context that should reshape retrieval before it begins. Pipelines that treat the patient as a downstream filter are solving the wrong problem at the wrong stage.

Where does this break?

The persona model depends on structured clinical input. Incomplete data produces a distorted persona, and the system returns confidently wrong results. That failure mode is worse than generic RAG because the patient trusts personalized output.

This remains an open challenge. A production deployment needs a persona validation layer and systematic evaluation across comorbidity complexity tiers.
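A persona validation layer could start as a completeness gate in front of retrieval. The sketch below is one assumed design, not something HPPIE implements: the required-field list, the age bounds, and the fallback to generic retrieval are all hypothetical choices.

```python
from typing import Any, Dict, List

# Assumed minimum set of attributes needed before personalizing retrieval.
REQUIRED_FIELDS = ("age", "sex", "medications", "conditions", "allergies")


def validate_persona(persona: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the persona is usable."""
    problems = []
    for name in REQUIRED_FIELDS:
        # None means "unknown". An empty list means "known to be none",
        # which is valid clinical information and passes validation.
        if persona.get(name) is None:
            problems.append(f"missing field: {name}")
    age = persona.get("age")
    if age is not None and not (isinstance(age, int) and 0 <= age <= 120):
        problems.append("age out of range")
    return problems


def choose_retrieval_mode(persona: Dict[str, Any]) -> str:
    """Fall back to generic retrieval rather than personalize on a distorted persona."""
    return "generic" if validate_persona(persona) else "persona"


complete = {"age": 65, "sex": "male", "medications": [],
            "conditions": ["hypertension"], "allergies": []}
incomplete = {"age": 65, "sex": "male", "conditions": ["hypertension"]}

print(choose_retrieval_mode(complete))
print(choose_retrieval_mode(incomplete), validate_persona(incomplete))
```

Distinguishing "no known allergies" from "allergies never recorded" matters here: the first is a fact the persona can use, the second is a gap that should downgrade the response to generic rather than confidently personalized.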

Questions this work raises

Can persona-modified retrieval outperform post-retrieval reranking at scale? In the prototype, yes. At production scale with millions of documents and thousands of concurrent personas, the computational cost has not been benchmarked.

Does local inference constrain clinical utility? 7B models produce shorter summaries than 70B+ cloud models. For patient education, acceptable. For clinical decision support requiring exhaustive differential analysis, insufficient.

How does this connect to identity infrastructure? HPPIE treats a patient persona as an identity primitive that governs what the system does.

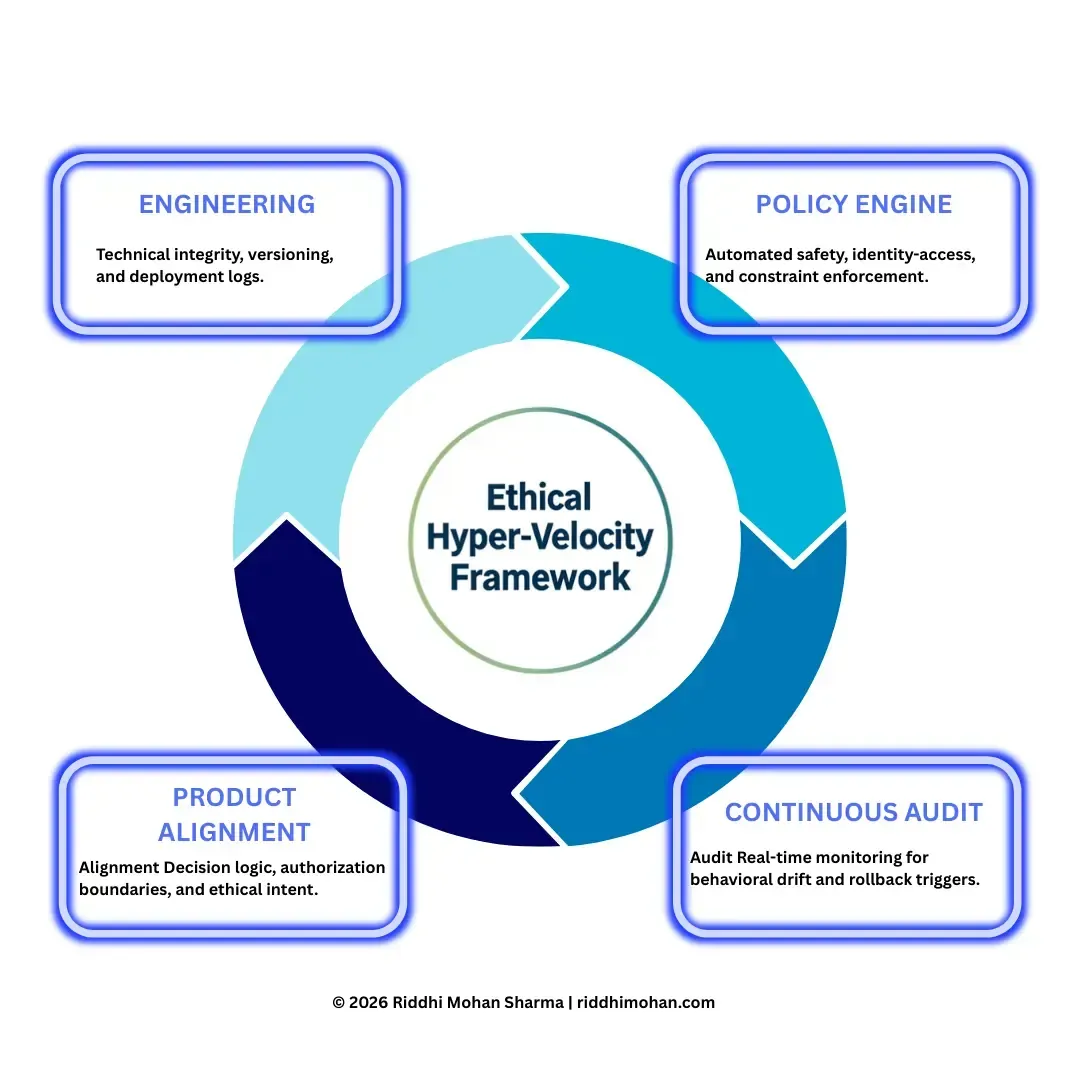

- Governance Architecture: 5 Pillars of Governance Architecture

- Technical Build: Case Study: Architecture Is Policy establishes the automated guardrails mentioned above.

- Operational Risk: Analyzing identity debt compounding in industrial-scale acquisitions.

Technical Index

| Component | Version | Status | Notes |
|---|---|---|---|
| HPPIE Pipeline | v1.0 | Active | FastAPI + Qdrant + Ollama three-stage architecture |
| Persona Modeling Layer | v1.0 | Active | Pre-retrieval persona injection into query embedding |
| Hybrid Scoring Engine | v1.0 | Active | 0.5 embedding / 0.3 BM25 / 0.2 behavioral weighting |
| Local Inference (Ollama) | v1.0 | Active | Dockerized on-prem deployment for HIPAA compliance |

Citation format: Sharma, Riddhi Mohan. "HPPIE: RAG Without Persona Modeling Fails Patient Clinical Relevance." Riddhimohan.com, March 2026. https://riddhimohan.com/blog/hppie-rag-without-persona-modeling-fails-patient-clinical-relevance

Version history: March 29, 2026: Initial case study published. Expanded from a Global AI Hackathon entry (2025).



Riddhi Mohan Sharma

Engineering Leader specializing in Global Digital Identity Architecture and M&A Technology Integration. Track record across multi-million dollar P&L, AI strategy, healthcare compliance (GDPR/HIPAA), and Identity platforms scaled to 3.5M+ users.

Framework Attribution

Disclaimer: The views, frameworks, and architectures presented here (including Architecture Is Policy / Ethical Hyper-Velocity and HPPIE) are my personal thoughts and original syntheses. They are inspired by and draw lessons from my broad enterprise-scale research and experience in healthcare identity, M&A integration, and AI governance. They do not represent the views, policies, or practices of my employer and are not based on any specific proprietary information, internal systems, code, metrics, or confidential details from my current or past roles. All examples and implementations are generalized or self-hosted on this personal site.